Metacognition is one of the most essential aspects of cognitive performance. It goes beyond the ability to solve complex problems: it examines the thought process used to reach the solution. The same applies to the problem-solving approaches of others and how they arrived at their solutions. Climbing the metacognitive ladder helps you regulate your own thinking and reason about the thinking of others, and it is a key contributor to high fluid intelligence. This module trains calibration: reducing overconfidence penalties and underconfidence hesitation under time pressure. The aim is more precise, more deliberate decision making, free of hesitation (underconfidence) and of lapses in focus (overconfidence).

-module_id: META_CALIBRATION_LADDER_ABSTRACT-

Results Dashboard (for guidance)

Enter these after each session. Use them to decide whether to advance, hold, or regress the difficulty level.

Your Metrics (enter after each session)

| Metric | Value |
| --- | --- |
| Strain flag | 0/1 |
| Accuracy | 0-100% |
| Mean confidence | ?/10 |
| Calibration error (average confidence - outcome × 100) | 0/10-20 |
| Overconfidence rate (% of wrong answers with confidence over 70%) | 0-10 |
| Level | 0-10 |
| Outcome (determined by player) | Advance / maintain / regress difficulty |
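As a rough illustration of how the accuracy, mean confidence, calibration error, and overconfidence rate in the table might be computed from one session, here is a minimal Python sketch. It assumes each trial is stored as a confidence rating (0-100) plus a correct/incorrect flag, and that calibration error is the absolute gap between mean confidence and accuracy, both in percent. The `Trial` class and `session_metrics` function are hypothetical names used only for this example.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    confidence: float  # self-rated confidence, 0-100 (assumed scale)
    correct: bool      # whether the answer was right


def session_metrics(trials):
    """Summarize one session. All formulas here are assumptions for illustration."""
    if not trials:
        raise ValueError("need at least one trial")
    n = len(trials)
    accuracy = 100.0 * sum(t.correct for t in trials) / n
    mean_confidence = sum(t.confidence for t in trials) / n
    # Averaging (confidence - outcome x 100) over trials equals
    # mean confidence minus accuracy; the absolute value is reported.
    calibration_error = abs(mean_confidence - accuracy)
    wrong = [t for t in trials if not t.correct]
    overconfident = sum(1 for t in wrong if t.confidence > 70)
    # Share of wrong answers given with confidence over 70; 0 if nothing was wrong.
    overconfidence_rate = 100.0 * overconfident / len(wrong) if wrong else 0.0
    return {
        "accuracy": accuracy,
        "mean_confidence": mean_confidence,
        "calibration_error": calibration_error,
        "overconfidence_rate": overconfidence_rate,
    }


example = [Trial(90, True), Trial(80, False), Trial(50, False), Trial(60, True)]
metrics = session_metrics(example)
```

In this example half of the answers are correct and one of the two wrong answers was given with confidence above 70, so the overconfidence rate comes out at 50%.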

Key benefits of Metacognition training:

-Target system-

-Protocol:

Train for 8 to 12 minutes, 3 times a week.

Answer each question and rate your confidence in your answer.

Review 10-trial summary

Log results

Apply progression rule (advance/hold/regress)

Stop only if you experience eye strain or fatigue.

- Feedback thresholds on each level of confidence:

- 20% or less: arbitrary guessing

- 20-40%: unsure

- 40-60%: slightly confident

- 60-80%: confident

- 80-95%: almost certain

- 100%: fully certain

(This list will be replaced by a slider within 2 weeks.)
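The confidence bands above could be turned into a simple lookup, sketched here in Python. Two details are assumptions, since the list does not settle them: each band includes its upper bound (so exactly 40 counts as "unsure"), and ratings between 95 and 100 are treated as "almost certain". The function name `confidence_label` is hypothetical.

```python
def confidence_label(confidence):
    """Map a 0-100 confidence rating to a feedback band (boundaries are assumed)."""
    if not 0 <= confidence <= 100:
        raise ValueError("confidence must be between 0 and 100")
    if confidence <= 20:
        return "arbitrary guessing"
    if confidence <= 40:
        return "unsure"
    if confidence <= 60:
        return "slightly confident"
    if confidence <= 80:
        return "confident"
    if confidence < 100:
        # Assumption: the gap between 95 and 100 is folded into this band.
        return "almost certain"
    return "fully certain"
```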

-Task (mechanic):

Solve 10 to 20 abstract problems drawn from: sequences, odd-one-out items, mini matrices, and minimal logic problems.

At the end of the session, select your confidence rating from 0 to 100 to receive feedback on your performance.

-Scoring (calibration-weighted):

Feedback varies with how well confidence matches the result. For example, a confidence of 95% combined with an inaccurate answer counts as overconfidence and triggers the progression penalty: progress reverts to an easier difficulty.

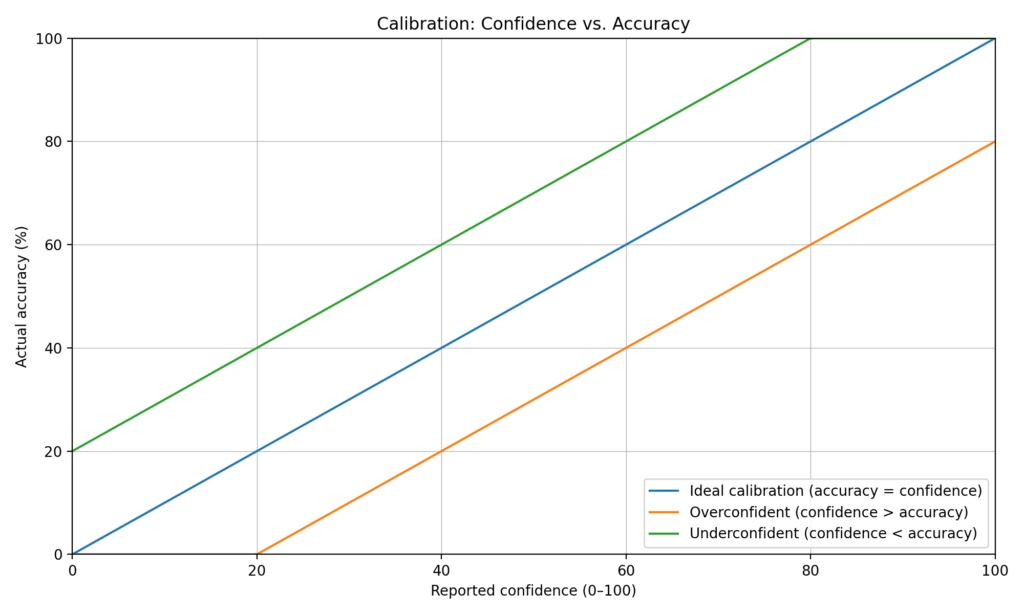

Good vs Bad Calibration (confidence vs accuracy)

X-axis: Confidence (0–100)

Y-axis: Actual accuracy (%)

What does this mean? Good calibration means high confidence when you are correct and low confidence when you are not, so the two track each other. Bad calibration is the opposite: high confidence with low accuracy, or low confidence with high accuracy, signals an imbalance that the exercises aim to correct.
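To make the confidence-versus-accuracy chart concrete, the sketch below groups trials into confidence bins and reports the actual accuracy in each bin. With good calibration, the accuracy of a bin falls close to the confidence range of that bin. The bin width of 20 points, the `(confidence, correct)` input format, and the name `calibration_curve` are assumptions for illustration, not part of the module.

```python
def calibration_curve(trials, bin_width=20):
    """Group (confidence, correct) pairs into confidence bins.

    Returns a list of (bin_start, bin_end, accuracy_percent, trial_count),
    sorted by bin. Assumes bin_width divides 100 evenly.
    """
    bins = {}
    for confidence, correct in trials:
        # A rating of exactly 100 is placed in the top bin.
        start = min(int(confidence // bin_width) * bin_width, 100 - bin_width)
        bins.setdefault(start, []).append(correct)
    curve = []
    for start, outcomes in sorted(bins.items()):
        accuracy = 100.0 * sum(outcomes) / len(outcomes)
        curve.append((start, start + bin_width, accuracy, len(outcomes)))
    return curve


trials = [(90, True), (85, True), (95, False), (30, False), (35, True), (100, True)]
curve = calibration_curve(trials)
```

Here the 80-100 bin holds four trials with three correct, and the 20-40 bin holds two trials with one correct, so the low-confidence answers were actually right more often than their ratings suggested.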

-Difficulty ladder (Levels 1–10)-

Difficulty levels range from 1 to 10 and are based on time cap, similarity, and ambiguity.

-Progression triggers-

Thresholds:

Accuracy ≥ 85%

Overconfidence rate ≤ 15%

Readiness next day ≥ 8/10

Otherwise hold/regress
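The thresholds above can be sketched as a small decision function. The advance condition follows the three listed thresholds. How to choose between hold and regress is not specified here, so this example assumes a hypothetical `regress_below` accuracy cutoff (default 60%) purely for illustration; the function name `next_level` and the clamping to levels 1-10 are also assumptions.

```python
def next_level(level, accuracy, overconfidence_rate, readiness, regress_below=60.0):
    """Return (decision, new_level) for the next session.

    accuracy and overconfidence_rate are percentages; readiness is out of 10.
    regress_below is a hypothetical cutoff, not defined by the module.
    """
    if accuracy >= 85 and overconfidence_rate <= 15 and readiness >= 8:
        return "advance", min(level + 1, 10)  # ladder tops out at level 10
    if accuracy < regress_below:
        return "regress", max(level - 1, 1)   # ladder bottoms out at level 1
    return "hold", level
```

For instance, `next_level(3, 90, 10, 8)` advances to level 4, while the same accuracy with a readiness of 7 holds at level 3.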

-Metrics to log-


Common failure patterns + fixes

-Abstract-only item pool-

The item pool contains five types:

- Next-in-sequence (shapes or numbers)

- Odd-one-out (rule-based classification)

- Matrix completion (2×2 or 3×3 mini-Raven)

- Minimal logic micro-items (True / False / Undetermined)

- Constraint check (valid/invalid under simple rules)

Metacognition is the ability to monitor and regulate your own thinking while solving problems. This module focuses on calibration—how accurately your confidence matches whether you’re actually correct—especially under time pressure. The Calibration Ladder trains you to reduce two major performance failures: overconfidence (committing without verification) and underconfidence (hesitation and unnecessary re-checking). Over time, this improves decision quality in any task that requires fast, accurate judgment—by strengthening when to commit, when to verify, and when to reset.

Transfer targets:

- High-pressure fixation video games (Geometry Dash, osu!, Roblox obbies): less panic after fails, faster resets, steadier execution under pressure

- Chess: fewer overconfident blunders, better time management, improved verification discipline

- Sudoku: less guessing, stronger “stop-and-reassess” behavior, cleaner logic discipline

Mechanism: better confidence calibration improves decision-making about when to commit vs verify, which reduces errors in high-pressure tasks.

Log Template — Metacognition: Calibration Ladder (Abstract-Only)

module_id: META_CALIBRATION_LADDER_ABSTRACT

Log template:

METACOGNITION LADDER

Date: _ | Duration: _ | Level: _ | Trials: _ | Ready: _/10 | Strain: 0/1

Accuracy: _% | Average time per problem: _ s | Mean confidence: _

Calibration error: _ | Overconfidence: _ (if over 70, stop) | Underconfidence: _ (if below 40, good; otherwise stop)

Decision: advance | hold | regress (difficulty)

All of this centers on managing overconfidence and underconfidence so that you stay in the right mental state while solving and perform at your best.

Coming soon:

Stroop (Inhibition)

Posner (Attention shifting)

UFOV (Perceptual speed / peripheral vision)